Using subdomains as a basic structural element is nothing new. It has helped Heureka acquire an almost legendary reputation. More than 10 years have passed since our website was created. Time has moved on, and online technologies are advancing at breakneck speed. The basic conditions for survival in the jungle that is the internet shift every day, whether because of changes by developers, search engine updates, or the activities of webmasters at competing websites. Panta rhei.¹ Everything flows and is subject to unavoidable change in the relentlessly evolving world of cyberspace.

It’s useless to hide behind legends, SEO myths, and senseless fiction. We live in a world where disinformation and propaganda are on the rise and should be combatted. We therefore decided to expose the innards of one of the most popular Czech websites, in the hope that this will help educate the public and dispel many false claims and “subdomain myths”.

A Quick Tangent About Terms

Within this text, the term “subdomain” is used for a third-level domain (elektronika.heureka.cz). The term “domain” is used for second-level domains (heureka.cz). The term TLD (top-level domain) has only one meaning and is used in its usual sense. Subdomains at lower levels are always referred to by their level number, e.g. “fourth-level domain” (test.elektronika.heureka.cz).

The Subdomain as the Bringer of Business Success

We must dispel one of the most common myths right at the beginning: the long-term success of Heureka and its use of subdomains are not particularly related. Companies and websites are successful thanks to competent management, the right strategies, top-notch services, exceptional products, a strong ability to compete, and so on. Conversely, companies and websites do not become successful because of valid HTML code, the use of subdomains or subfolders, an informal dress code, or even clean toilets.

Clean code and clean toilets are the results of human activity that depends on various factors, such as hiring a capable developer and an effective cleaner and giving them appropriate working conditions. Under the right conditions, these can have positive effects that spill over into other processes and contribute to the company’s success.

Replicating a certain process or result without a detailed knowledge of all circumstances usually results in failure. Thinking that subdomains are a guarantee of success and trying to copy that method is the same as cleaning toilets without any cleaning products.

The details you don’t usually see are that the cleaner uses a mop rinsed in a bucket of clean water and detergent.² The result is a clean toilet. Repeating the same activity without the know-how, without regularly changing the water, and without the appropriate cleaning products results in a room that most people won’t want to enter.

Without detailed knowledge of the entire process and the real background, you don’t even have the chance to understand the less attractive and riskier parts of reality. Are you just copying an ingenious strategy, or creating a well-camouflaged disaster? Subdomains can be just a bucket full of nasty shit from Satan himself that many admire and consider to be crystal-clear water. Success, simply put, doesn’t come from subdomains. Period.

A Short History of Heureka’s Subdomains

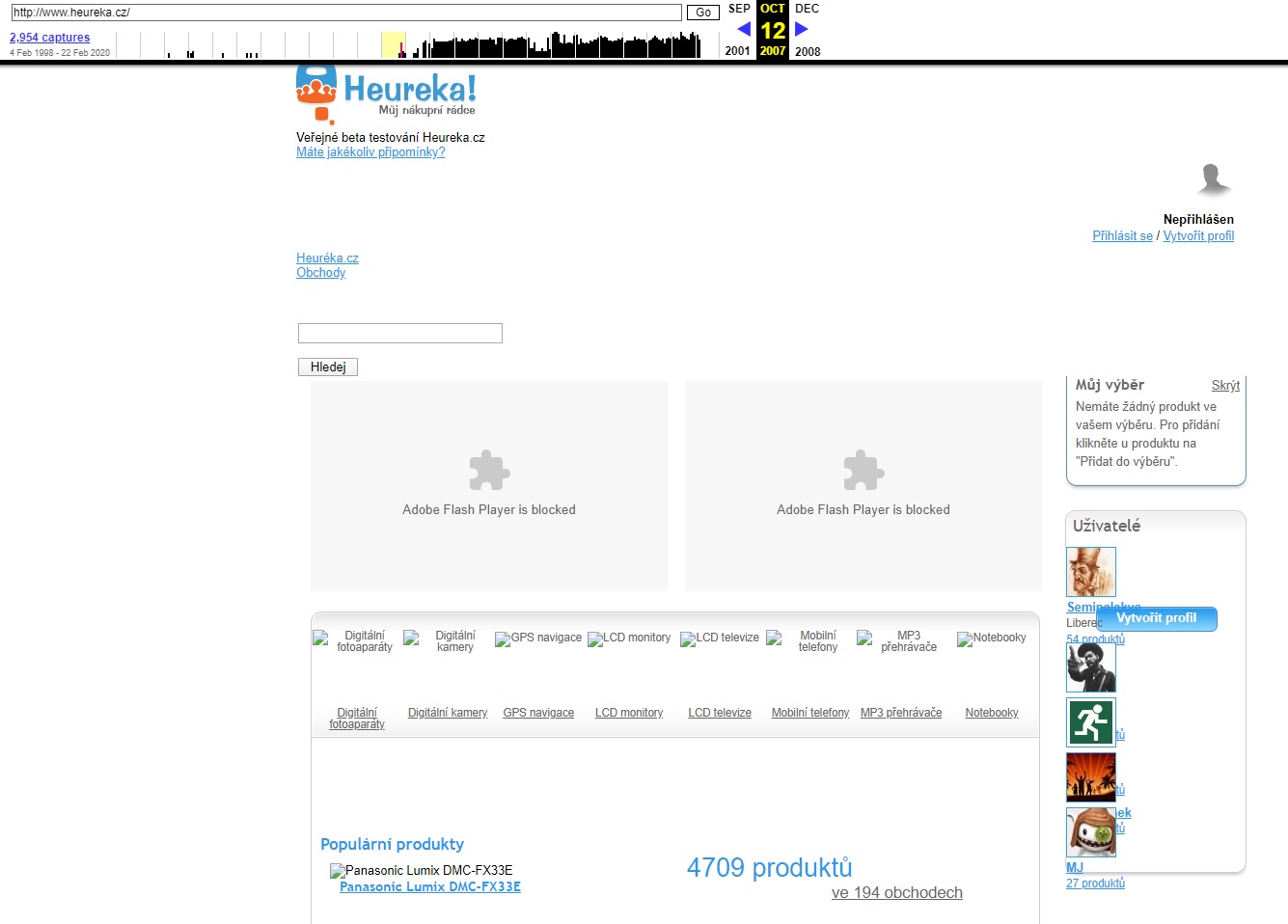

Heureka was founded in 2007³ and has used subdomains to build its category tree from the very beginning. There were about 30 subdomains in the public beta, many of which are still used today, such as elektronika.heureka.cz, notebooky.heureka.cz, and mobilni-telefony.heureka.cz.

After the end of beta and launch of Heureka into full operation, the category tree gradually began to grow, expanding to about 2,500 categorical subdomains, the latest of which include roboticke-vysavace.heureka.cz or auta.heureka.cz.

Another fundamental addition came in 2009. Heureka started to use brands to a greater extent and created “brand corners”, which are subdomains dedicated to specific brands.

At the time, sony.heureka.cz and nikon.heureka.cz seemed like a logical move. However, it wasn’t well thought out either technically or strategically, and it proved ineffective over the long term. Over the years, some 60,000 brand subdomains were created.

What we call the “parameter sections” were the last addition, in 2010. They were created by a new, non-standard approach: their foundations were categories with a specific combination of filters selected (e.g. mobilni-telefony.heureka.cz/f:1666:101069/), which were moved into a specialized, isolated system built on a new type of subdomain (e.g. iphone.heureka.cz). The result was a last level of the category tree, created by copying part of the website from a higher level of the tree. In the end, there were some 1,000 parametric subdomains.

The following years were positive, and no other fundamental types of subdomain were created. The only exception was the mobile version of the website, created in 2013 as an update of the 2009 WAP version.

Review of Subdomains in 2018

- Basic, systemic, mobile, and external subdomains (www, m, blog, info, darky): about 20 subdomains.

- Categorical subdomains (notebooky, mobilni-telefony, elektronika): about 2,500 subdomains.

- Brand subdomains (sony, nikon, apple): about 60,000 subdomains.

- Parametric subdomains (herni-notebooky, android-telefony, xbox-360): about 1,000 subdomains.

We don’t know the exact total, but it’s around 65,000 subdomains. Is that a nice foundation for high-quality SEO, or for a huge number of problems?

Postulates of Chaos

Using subdomains as a basic building block was a stroke of genius in a way. Theoretically, it offers the option of creating a clearly defined and controlled environment. Subdomains help create delimited thematic (eco)systems. However, at no point in Heureka’s history were these closed and controlled environments. Quite the contrary, actually.

During the first few years, the complexity of the website grew into a state that wasn’t sustainable and could no longer be analysed: tens of thousands of subdomains and hundreds of billions of URLs. Some parts were very strongly connected through logical links. Elsewhere, the structure was a bottleneck that prevented effective ranking and crawling. Moreover, “tumours” in the form of gigantic spider traps, made of massive self-replicating duplicates and thin content, grew on the periphery of the system.

Altogether, we’re talking about a total number of URLs that reaches over a sextillion: 10^21, or 1,000,000,000,000,000,000,000 URLs.⁴ It could be a few orders of magnitude more, but there’s no point in working out an exact number because a sextillion is already well beyond the limits of sanity.

Every website has the potential to be infinite. From a certain point of view, each subdomain is its own website. When there are 65,000 subdomains, there are 65,000 disasters waiting to happen, each with the potential to expand infinitely and uncontrollably.
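To get a feel for how quickly a single filtered category can grow, here is a minimal Python sketch. The filter counts are invented for illustration and are not Heureka’s real data. It assumes each filter is either unused or set to exactly one value, and it ignores the extra duplicates that appear when the same filters are written in a different order.

```python
from math import prod


def filter_combinations(values_per_filter):
    """Count the non-empty filter selections of one category.

    Assumption: every filter is either left unused or set to exactly
    one of its values, so a filter with v values contributes v + 1 states.
    """
    return prod(v + 1 for v in values_per_filter) - 1


# Hypothetical category: 20 filters with 10 possible values each.
hypothetical_category = [10] * 20
print(filter_combinations(hypothetical_category) > 10**20)  # True
```

Under these assumptions, one category with twenty ten-value filters already exceeds 10^20 possible URLs, all inside a single subdomain.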

Discrete Website Models

There are many ways to reduce potential problems to a minimum on a website. One of the goals of a good webmaster should be limiting the autonomous growth of the website in areas that could expand infinitely, which requires extreme thoroughness, attention, and a rather deep knowledge of a number of areas.

For a quick example, let’s look at the well-known cellular automaton⁵ Game of Life.⁶ Fascinating and complex 8-bit life emerges from just four simple rules. The advantage of experimenting with cellular simulations is that both the space they occupy and the length of their existence can be limited.
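A bounded simulation of this kind can be sketched in a few lines of Python. The grid size, the hard edges (cells outside the grid are always dead), and the function names are illustrative assumptions, not part of any Heureka tooling.

```python
from collections import Counter


def step(live, width, height):
    """Advance one generation on a bounded width x height grid."""
    # Count, for every cell, how many live neighbours it has.
    neighbour_counts = Counter(
        (x + dx, y + dy)
        for (x, y) in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # A cell is alive next turn if it has 3 neighbours,
    # or 2 neighbours and is already alive. Space is limited to the grid.
    return {
        cell
        for cell, n in neighbour_counts.items()
        if 0 <= cell[0] < width
        and 0 <= cell[1] < height
        and (n == 3 or (n == 2 and cell in live))
    }


def run(live, width, height, generations):
    """Limit time as well: stop after a fixed number of generations."""
    for _ in range(generations):
        live = step(live, width, height)
    return live


blinker = {(1, 0), (1, 1), (1, 2)}
print(run(blinker, 3, 3, 2) == blinker)  # True: the blinker has period 2
```

Both limits are explicit arguments here. That is exactly the luxury a live website does not have.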

However, there are many more basic online rules that must be defined. Time and space have extreme limits. There are dozens or even hundreds of potential variables, states, and external influences.

Independent systems can then emerge in the subdomain world and live lives of their own. It must be said that it doesn’t matter whether we are discussing directories or lower-level domains: these problems can arise anywhere. They are usually harder to detect in lower-level domains, though, because we’re looking for atypical patterns of behaviour in subsystems that have their own unique sets of rules more often than directories do.

Extreme Technical Limits

Unfortunately, normal online systems will never be discrete⁷ models. Cyberspace is a constantly changing, dynamic environment. Thankfully, at least the existence of websites and domains has some boundaries, primarily related to the limits of the Domain Name System (DNS), browsers, search engines, and various protocols.

Example of Browser and DNS Limits

- The maximum length of a domain name is 255 characters.⁸

- The maximum length of a domain label is 63 characters.⁹

- The maximum number of domain levels (labels) in a single name is 127.

- The Sitemap protocol requires the URL in the `<loc>` tag to be shorter than 2,048 characters.¹⁰

- The maximum length of a URL in the Internet Explorer address bar is 2,083 characters, and the path portion of the URL is limited to 2,048 characters.¹¹

- The generally recommended and optimal URL length is less than 2,000 characters.

There are various limits across platforms. For example, Chrome should be able to handle up to 32,779 characters in the address bar, but it’s generally recommended to keep the URL length under 2,000 characters, as noted above. That is the most universal and safest solution.

The important thing is that there are some limitations. Therefore, there is a relatively limited number of valid and processable URLs, which is at least a bit of positive news.
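The limits listed above are easy to check mechanically. The following Python sketch assumes plain ASCII hostnames and counts the name length the way RFC 1035 does on the wire (one length octet per label plus a terminating zero octet), which is also where the figure of 127 levels comes from. The function names and messages are hypothetical.

```python
MAX_NAME_OCTETS = 255    # RFC 1035 limit for a full domain name
MAX_LABEL_OCTETS = 63    # RFC 1035 limit for a single label
SAFE_URL_LENGTH = 2000   # conservative URL length recommended in the text


def check_hostname(hostname):
    """Return a list of problems found in a dotted ASCII hostname."""
    problems = []
    labels = hostname.rstrip(".").split(".")
    # Wire format: a length octet before each label, plus the root's zero octet.
    wire_length = sum(len(label) + 1 for label in labels) + 1
    if wire_length > MAX_NAME_OCTETS:
        problems.append("name too long")
    for label in labels:
        if not label:
            problems.append("empty label")
        elif len(label) > MAX_LABEL_OCTETS:
            problems.append("label too long")
    return problems


def url_is_safe(url):
    """True if the URL stays under the recommended 2,000 characters."""
    return len(url) < SAFE_URL_LENGTH


print(check_hostname("elektronika.heureka.cz"))   # []
print(check_hostname(".".join(["a"] * 128)))      # ['name too long']
```

A name made of 127 one-character labels still fits (127 × 2 + 1 = 255 octets), while 128 labels do not.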

Tool Limits

Webmasters need tools to gain greater control over their websites, and these tools have their own limits. Google Search Console (GSC) is one of the most fundamental diagnostic tools.

Up to 2015, GSC limited accounts to 100 properties. With Heureka’s 65,000 subdomains, that meant we would have needed about 650 accounts, connected through APIs in another tool like Keboola.¹²

That limit was changed to 1,000 properties in 2015, which means only 65 accounts would be necessary to cover all of Heureka.
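The arithmetic behind both figures is a simple ceiling division. This sketch assumes one GSC property per subdomain; the function name is hypothetical.

```python
def accounts_needed(subdomains, properties_per_account):
    """Ceiling division: the fewest accounts that cover every subdomain."""
    return -(-subdomains // properties_per_account)


print(accounts_needed(65_000, 100))    # 650 (limit before 2015)
print(accounts_needed(65_000, 1_000))  # 65 (limit from 2015)
```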

A partial reprieve came in 2019 in the form of GSC properties at the domain level.¹³ We finally had data available for the entire website. However, it still wasn’t possible to filter at the level of subdomains, and we still had to create independent properties for the most important ones. That makes it impossible to compile subdomain details into logical data units and analyse them.

Google Analytics also isn’t an attractive tool for subdomains. They can be evaluated, but there are basic problems with evaluating larger logical units, such as all subdomains that fall under the photo.heureka.cz category. That can be resolved using something like Content Grouping, but the fundamental prerequisite is a correct and thorough implementation of the analytics configuration.

The last option is access logs and Kibana. But even here it isn’t possible to evaluate anything concerning subdomains. The inability to use traditional regexes is a real handicap. Lucene¹⁴ and the other integrated query languages (DSL, KQL, regexp) aren’t suited to building more complex queries.
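For contrast, here is the kind of question a traditional regex answers easily when it is run over raw log lines outside Kibana. This is only a sketch: the log layout (`host method path status`), the `LINE` pattern, and the sample lines are assumptions made for illustration, not Heureka’s actual log format or tooling.

```python
import re
from collections import Counter

# Hypothetical log layout: "<host> <method> <path> <status>".
# The pattern captures third-level subdomains of heureka.cz only.
LINE = re.compile(
    r"^(?P<sub>[a-z0-9-]+)\.heureka\.cz\s+\S+\s+(?P<path>\S+)\s+(?P<status>\d{3})$"
)


def hits_per_subdomain(lines):
    """Count requests per subdomain, skipping lines that do not match."""
    counts = Counter()
    for line in lines:
        match = LINE.match(line.strip())
        if match:
            counts[match.group("sub")] += 1
    return counts


sample = [
    "mobilni-telefony.heureka.cz GET / 200",
    "mobilni-telefony.heureka.cz GET /f:1666:101069/ 200",
    "sony.heureka.cz GET / 301",
    "malformed line",
]
print(hits_per_subdomain(sample).most_common())
```

With tens of thousands of subdomains, the counts would still need to be rolled up into the four subdomain types to be readable.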

By using subdomains, we limited ourselves over the long term in many ways. Detailed analytics were impossible. We could only work with a fraction of the data, and it was impossible to have adequate control over the website.

SEO on the Edge of Chaos

After a time, “random” problems started to appear on the website at irregular intervals. Small and seemingly innocuous development changes, combined with laxly set website rules, caused extensive chain reactions that entered a cycle with a potentially infinite number of iterations.

Because of imperfections in analytics and the absence of complete data from Google Search Console, we were, in a way, blind to many existing problems. We reached the so-called “edge of chaos”.¹⁵

As was suggested, websites are not discrete systems and are influenced by many external factors. But they also aren’t fully stochastic¹⁶ and infinite systems. At their core, websites are subject to a set of relatively simple rules and patterns of behaviour. The task of a webmaster is to know these rules and how to set them correctly.

In practice, these are often various fail-safe mechanisms,¹⁷ such as automatic redirects from HTTP to HTTPS, taking care of slashes at the end of the URL, and so forth.
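The two mechanisms named above can be expressed as one small canonicalization rule. The Python sketch below is an assumption about what such a rule might look like, not Heureka’s actual configuration: it forces HTTPS, lowercases the host, and adds a trailing slash to paths whose last segment has no file extension.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url):
    """Return the redirect target for a URL, or None if it is already canonical."""
    parts = urlsplit(url)
    path = parts.path or "/"
    # Assumed rule: directory-like paths end with a slash;
    # paths whose last segment contains a dot (e.g. image.png) are left alone.
    last_segment = path.rsplit("/", 1)[-1]
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    target = urlunsplit(
        ("https", parts.netloc.lower(), path, parts.query, parts.fragment)
    )
    return None if target == url else target


print(canonical_url("http://notebooky.heureka.cz"))    # https://notebooky.heureka.cz/
print(canonical_url("https://notebooky.heureka.cz/"))  # None
```

Because every non-canonical variant maps to exactly one target, the number of URLs that can be crawled for the same page stays fixed instead of multiplying.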

Over time, the gradual minimization or ideally the elimination of uncontrolled (chaotic) elements makes the website an entity managed by firmly set rules. Internal changes thus become more predictable, countable, and measurable.

Cutting into the Undead

Heureka as a whole was changing. A new responsive design was being developed. The old monolithic code was being transferred into microservices. This was an ideal opportunity to change the approach to SEO. Instead of quantity, we decided to go down the path of quality and cut into the rotting mess that had been ignored for years.

You may ask: “Why? It was working. Why change it?” But it wasn’t going to work over the long term. That just wasn’t obvious at first glance. Subdomains had reached their ceiling long ago and stalled out. Detailed analyses helped us discover fundamental shortcomings and prove that bigger changes were absolutely necessary. Some parts of the website were more dead than alive. Many were cannibalizing each other in organic and paid channels. It was a crazy cannibal zombie monster running on momentum.

Planning, foreseeing effects, analysis, and validation took almost a year, and even more in some cases. Changing the structure of a website that was built over 12 years isn’t exactly easy. However, it was necessary to act before falling over the edge of chaos. It’s better to operate on a living being than to revive a corpse and hope at least some life functions will come back online.

Conclusion

Our first, somewhat theoretical article is ending, but our series on subdomains is only beginning. The next article, titled “Cancer SEOtherapy”, is a detailed account of how we surgically removed 1,000 parametric subdomains, with a primary focus on practical approaches, in-house tools, and useful tips for webmasters.

Series on SEO and Subdomains

- Satan, SEO, and Subdomains – VOL I. – Crawling Chaos

- Satan, SEO, and Subdomains – VOL II. – Cancer SEOtherapy

- Satan, SEO, and Subdomains – VOL III. – Controlled SEOcide

- Satan, SEO, and Subdomains – VOL IV. – One To Rule MFI

- Satan, SEO, and Subdomains – VOL V. – SEOfirot of the Tree of Life

- Satan, SEO, and Subdomains – VOL VI. – Horsemen of the SEOcalypse

Disclaimer

Approach this text carefully. This article and the series in general are not a guide. These texts don’t include any “universal truths”. Each website is a unique system with various initial conditions. An individual approach is necessary, as well as a perfect knowledge of the specific website and the given problems.

The article discusses our website. We aren’t generally evaluating the effectiveness of subdomains and directories. We also don’t recommend any specific solution. Again, this is a very individual matter that is influenced by many factors. The strategy and detailed plans for some of the activities described here were created over the course of a year. Everything was discussed exhaustively, constantly tested, and validated. Please remember that if you decide to do something similar.

Some of the data listed can be imprecise or purposefully distorted. Specific numbers, like organic traffic, revenue, conversion, etc., cannot be published for obvious reasons. The key information, like the number of subdomains, the number of URLs, and our methods, is recounted exactly without any embellishment.

The texts can include advanced concepts and models that are not standard in terms of SEO. The articles are thus accompanied by footnotes with sources that explain everything.

Footnotes

1. Panta rhei: https://en.wikipedia.org/wiki/Heraclitus.

2. Detergent. Strong chemical compound and solvent used for cleaning. Doesn't have a healing effect! Can't replace vaccination!

3. About Heureka.cz and Heureka Group: https://heureka.group/cz-en/.

4. We’re using English-style numbers where “sextillion” = 10^21: https://en.wikipedia.org/wiki/Orders_of_magnitude_(numbers).

5. Cellular automaton: https://en.wikipedia.org/wiki/Cellular_automaton.

6. Game of Life: https://en.wikipedia.org/wiki/Conway%27s_Game_of_Life.

7. Discrete mathematics: https://en.wikipedia.org/wiki/Discrete_mathematics.

8. For various URL limits, see section 2.3.4 in RFC 1035: https://www.ietf.org/rfc/rfc1035.txt.

9. Ibid.

10. On character limits in sitemaps as XML tag definitions: https://www.sitemaps.org/protocol.html.

11. Characters in the IE address bar: https://support.microsoft.com/cs-cz/help/208427/maximum-url-length-is-2-083-characters-in-internet-explorer.

12. Keboola: https://www.keboola.com/.

13. Domain Level in GSC: https://webmasters.googleblog.com/2019/02/announcing-domain-wide-data-in-search.html.

14. Lucene: https://www.elastic.co/guide/en/kibana/current/lucene-query.html.

15. Edge of chaos: https://en.wikipedia.org/wiki/Edge_of_chaos.

16. Stochastic: https://en.wikipedia.org/wiki/Stochastic.

17. Fail-safe: https://en.wikipedia.org/wiki/Fail-safe.