Regular website updates can unfortunately be rather time-consuming, especially when you discover that a Russian hacker has stolen a subdomain of a blog hosted on Github. We decided to publish this story, including the entire repair process, because there are countless tutorials on exploiting security holes to harm others, but we could find very little information about preventing and fixing such problems.

2022 Update Note: The blog was moved from Github to a new CMS and our own servers in March 2021.

Routine Checks

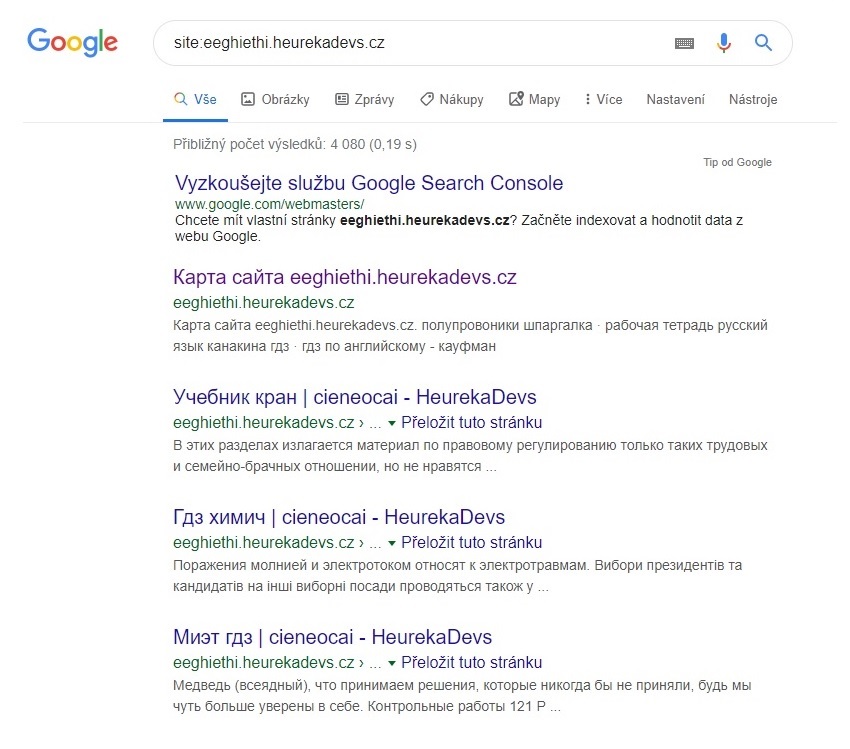

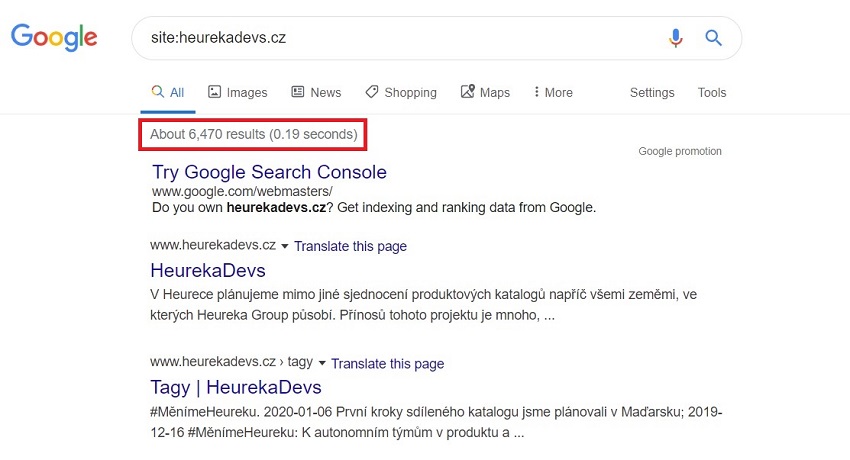

As webmasters, we invest most of our time in Heureka’s main websites. Microsites usually don’t generate many problems, so we maintain them with quick routine checks. One such 15-minute autumn check of the heurekadevs.cz blog upset the apple cart, however: it stretched over a couple of days and swallowed several hours of work. The usual first step when updating a website is to check its indexation and the state of the SERP using the site: operator. We knew from our web crawler that we should expect around 40 to 50 results. Google, however, showed over 6,000. After clicking through the SERP for a while, we found a flood of Russian snippets from the subdomain eeghiethi.heurekadevs.cz.
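Comparing the crawler's page count with Google's result count works by eye, but with thousands of results it helps to group the indexed URLs by hostname so unknown subdomains stand out. The following is a minimal sketch, assuming you already have a list of indexed URLs (for example, exported from a Search Console report or collected from the SERP); the function names and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit


def hosts_in_index(urls: list[str]) -> Counter:
    """Count how many indexed URLs belong to each hostname."""
    return Counter((urlsplit(u).hostname or "").lower() for u in urls)


def unexpected_hosts(urls: list[str], expected: set[str]) -> dict[str, int]:
    """Return hostnames that appear in the index but are not on the expected list."""
    counts = hosts_in_index(urls)
    return {host: n for host, n in counts.items() if host not in expected}


# Hypothetical sample of indexed URLs.
indexed = [
    "https://heurekadevs.cz/",
    "https://heurekadevs.cz/blog/post-1/",
    "http://eeghiethi.heurekadevs.cz/page-1",
    "http://eeghiethi.heurekadevs.cz/page-2",
]
print(unexpected_hosts(indexed, expected={"heurekadevs.cz", "www.heurekadevs.cz"}))
# {'eeghiethi.heurekadevs.cz': 2}
```

A host with thousands of URLs that you never created is exactly the kind of signal the site: check surfaced here.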

We knew for certain that no one had intentionally created the eeghiethi subdomain, which left two possible explanations. The first hypothesis was that it was leftover junk from a previous owner of the domain that Google had not yet cleared out, but that idea quickly died: heurekadevs.cz was a relatively new domain and we were its first owners. The second hypothesis was that someone had hacked into the website, which we had not paid much attention to in terms of SEO. That one proved correct.

How to Tackle Hackers

This is a textbook example of subdomain hijacking (also called subdomain takeover). We dove into the problem, examining it and documenting our work to gather as much information as we could.

The content on the eeghiethi.heurekadevs.cz website included the email address Mirurokov@gmail.com, which led to the domain mirurokov.ru, listed on several less important blacklists. None of these leads proved helpful.

Googling the phrase “subdomain hijack github” bore fruit, however: there are dozens of tutorials on how to hijack a subdomain on Github. For example, the article “A Guide to Subdomain Takeovers” describes the method in considerable detail and includes an important clue that helped us find the cause of the vulnerability and a possible fix.

Damn DNS

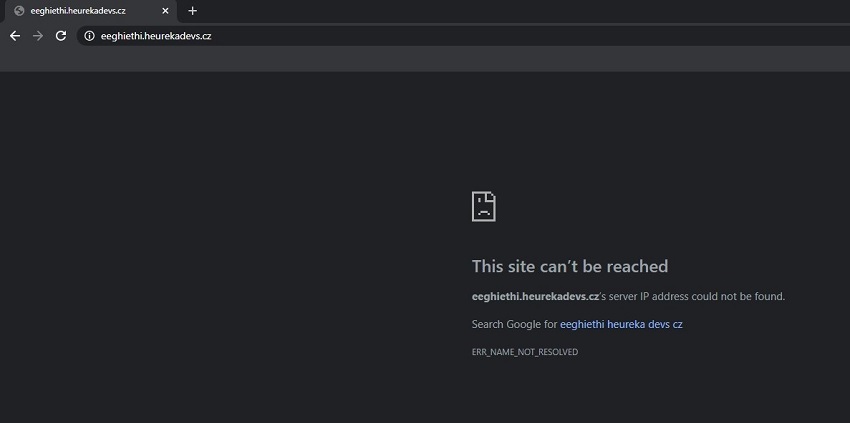

The error was in our DNS records. Every subdomain of heurekadevs.cz was pointed to Github servers through the wildcard A record *.heurekadevs.cz. It was a tiny mistake made when the blog was created. Because any subdomain resolved to Github, anyone could set up a Github Pages site claiming an arbitrary subdomain of ours, and Github would serve it.

All we then had to do was delete the wildcard record and point only the main domain to Github. This also took the spam subdomain offline. The DNS fix was the last step in the repair process, and all the phases of that process are described in more detail below.
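The audit and the fix can be expressed as a small check over a zone's records. This is a minimal sketch that assumes the records have already been exported from the DNS provider into a simple list; the Record type and function names are hypothetical, and the four addresses are the apex A-record IPs Github documents for Github Pages (we are not claiming these were the exact values in our zone).

```python
from dataclasses import dataclass

# Apex A-record addresses documented by GitHub for GitHub Pages.
GITHUB_PAGES_IPS = {
    "185.199.108.153",
    "185.199.109.153",
    "185.199.110.153",
    "185.199.111.153",
}


@dataclass(frozen=True)
class Record:
    """Hypothetical, simplified DNS record as exported from a provider."""
    name: str   # e.g. "heurekadevs.cz." or "*.heurekadevs.cz."
    rtype: str  # "A", "CNAME", "TXT", ...
    value: str


def risky_wildcards(records: list[Record]) -> list[Record]:
    """Wildcard records that send every subdomain to GitHub Pages."""
    return [
        r for r in records
        if r.name.startswith("*.")
        and (
            (r.rtype == "A" and r.value in GITHUB_PAGES_IPS)
            or (r.rtype == "CNAME" and r.value.rstrip(".").endswith(".github.io"))
        )
    ]


def fixed_zone(records: list[Record]) -> list[Record]:
    """Drop the risky wildcards; keep everything else, including apex A records."""
    bad = set(risky_wildcards(records))
    return [r for r in records if r not in bad]


zone = [
    Record("heurekadevs.cz.", "A", "185.199.108.153"),
    Record("*.heurekadevs.cz.", "A", "185.199.108.153"),
]
print(risky_wildcards(zone))
print(fixed_zone(zone))
```

Running fixed_zone over a zone like this keeps the main domain pointing to Github and drops only the wildcard, which mirrors the fix described in this section.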

How to Quickly Check for Vulnerabilities

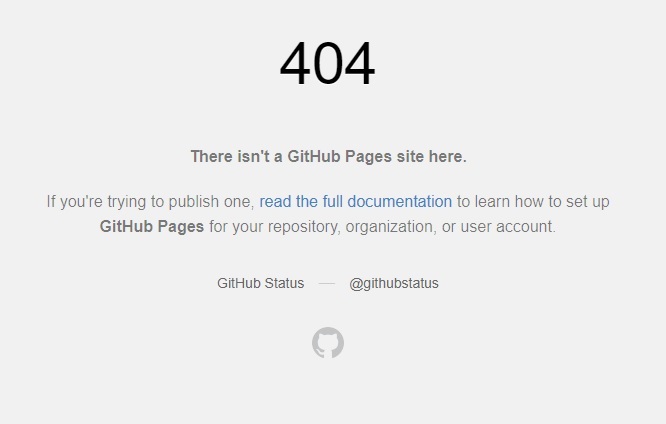

If you use your own domain on Github, enter the URL anything.yourdomain.cz into the address bar. If you see the same 404 error as in the image above, there is a very real risk of subdomain hijacking. It’s a common problem. Github now warns about these risks in its instructions for pointing DNS to its servers; that warning was not there in 2020, when this article was first published.
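The manual check can be automated with a randomly generated subdomain, so you never accidentally test a name that really exists. A rough sketch, assuming that the Github Pages 404 page still contains the text “GitHub Pages site here” (the wording may change); the resolver and fetcher are passed in as parameters so the logic can be tested without network access, and all names here are hypothetical.

```python
import secrets
import socket
import urllib.error
import urllib.request
from typing import Callable, Optional

# Assumption: text shown on the GitHub Pages 404 page for unclaimed custom
# domains. The exact wording may change over time.
GITHUB_404_MARKER = "GitHub Pages site here"


def resolve(host: str) -> Optional[str]:
    """Return an IPv4 address for host, or None if the name does not resolve."""
    try:
        return socket.gethostbyname(host)
    except socket.gaierror:
        return None


def fetch(url: str) -> str:
    """Return the page body; HTTP errors such as 404 still return their body."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.read().decode("utf-8", "replace")
    except urllib.error.HTTPError as err:
        return err.read().decode("utf-8", "replace")


def probe(
    domain: str,
    resolver: Callable[[str], Optional[str]] = resolve,
    fetcher: Callable[[str], str] = fetch,
) -> str:
    """Automate the 'anything.yourdomain.cz' check using a random label."""
    host = f"{secrets.token_hex(6)}.{domain}"
    if resolver(host) is None:
        return "safe: a random subdomain does not resolve"
    if GITHUB_404_MARKER in fetcher(f"http://{host}/"):
        return "at risk: a random subdomain shows the Github Pages 404"
    return "check manually: a random subdomain resolves somewhere"
```

For a live check, call probe("yourdomain.cz") with the default resolver and fetcher. A "check manually" result is not necessarily a problem; it only means the random name resolves somewhere, so look at what that address actually serves.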

Phases of Repair

1) First, we analysed the problem in depth: we documented it, formed hypotheses, and came up with a first version of a solution. This did not take long, and well-documented information can save a great deal of time in the future.

2) With enough information and data collected, we needed to determine who was responsible for the affected area and give them all the relevant information so they could start removing the spam.

3) Using a DNS TXT record, we verified a Domain Level property for heurekadevs.cz in the Google Search Console (GSC). Verifying the domain gave us control over the entire website, including all its subdomains, and the ability to create any URL Level property under it.

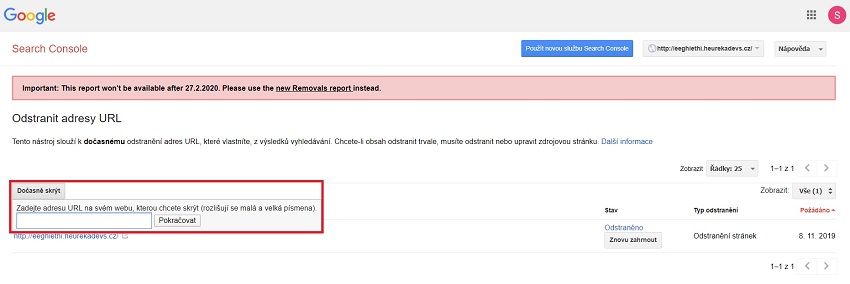

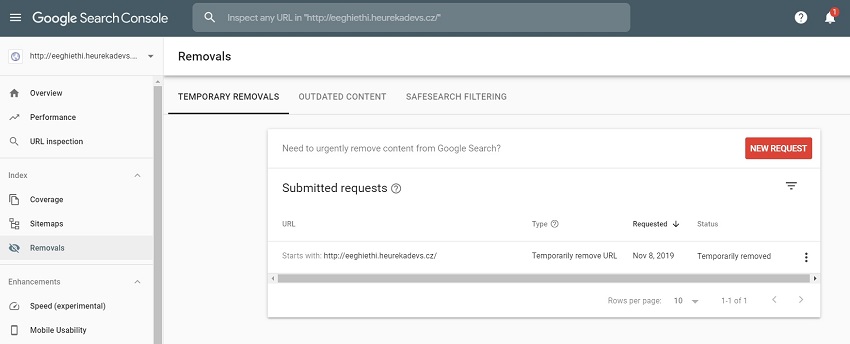

4) We created a URL Level property for http://eeghiethi.heurekadevs.cz in the Search Console. That gave us access to the URL Removal Tool, which temporarily removes a URL, a subdomain, or an entire domain from the search results. We used it to remove the entire subdomain, and within a few minutes the spam was completely scrubbed from the search results. You can read more about this tool below.

5) We fixed the DNS A records as described in the previous part of the article. Why was this the last step, rather than coming before steps 3 and 4? Doing it earlier would not have caused any problems; we simply wanted to collect some data in the newly created Search Console property while the hijacked subdomain was still live.

6) The spam was removed along with its effects and its cause, and subdomains can no longer be hijacked through Github.

The URL Removal Tool

Without any exaggeration, this is a weapon of mass destruction. Using the URL Removal Tool, you could erase any website you had verified from the Google SERP, albeit only temporarily, for 3 to 6 months. Huge numbers of addresses could disappear from search results after just a couple of clicks. The tool was available at https://www.google.com/webmasters/tools/url-removal. Clicking the “Temporarily Hide” button displayed a text field. If you left the field empty and continued to the next step, the property’s entire domain, at whatever level (including a subdomain), was removed from the results. This was not common knowledge. When we used it, it was a legacy tool from the older version of the Google Search Console, and it was shut down on February 27, 2020.

The replacement for this tool is now found directly in the Google Search Console under the Removals tab. It is a significantly improved version that makes it easier to manage the removed addresses. You can find official information about this feature in the “New Removals report in Search Console” post on the official Google Webmasters blog. It was not yet available to us in the fall of 2019.

An important note: this tool and its predecessor are available in the GSC only to Owners and users with full rights. Always be careful whom you give the ability to erase your entire website from search results.

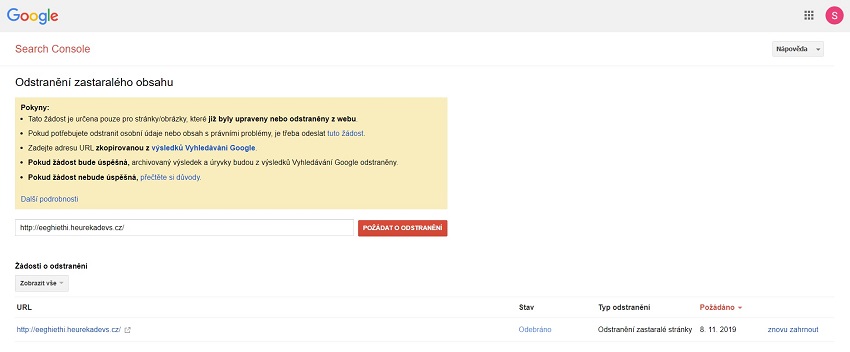

There is also a similar, lesser-known tool at https://www.google.com/webmasters/tools/removals. It is primarily meant for removing individual outdated or defunct URLs from search results.

Jekyll and SEO

We used several tools when working on the blog. The Screaming Frog desktop crawler served to collect data and validate changes, and the Google Search Console to take control of the website and de-index the spam.

Finally, there were Github and Jekyll (a simple generator of small blogs and static websites), because we wanted to fix the other errors we had discovered right away: HTTPS, mixed content, basic redirects, internal links, duplicate URLs, and so on.

At first glance, the system didn’t seem very SEO-friendly, but getting to grips with Jekyll and Liquid filters and preparing fixes for all the errors took only about three hours in the end. Not that big a deal.
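Mixed content, one of the errors listed above, means an HTTPS page loading resources over plain HTTP. Below is a minimal sketch of a checker built on Python's standard html.parser, meant to be run over generated HTML; the tag and attribute list is a simplified assumption, not a complete list of what browsers treat as mixed content, and the names are hypothetical.

```python
from html.parser import HTMLParser

# Simplified assumption: tags whose URL attribute makes the browser load a
# subresource. An http:// URL here on an https:// page is mixed content.
RESOURCE_ATTRS = {
    "img": "src",
    "script": "src",
    "iframe": "src",
    "source": "src",
    "audio": "src",
    "video": "src",
    "link": "href",
}


class MixedContentFinder(HTMLParser):
    """Collect insecure (http://) subresource URLs from an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.insecure: list[str] = []

    def handle_starttag(self, tag, attrs):
        # Self-closing tags (<img ... />) also arrive here, because the default
        # handle_startendtag calls handle_starttag.
        attr_map = dict(attrs)
        if tag == "link" and "stylesheet" not in (attr_map.get("rel") or "").lower():
            return  # e.g. rel="canonical" is a hint, not a loaded resource
        wanted = RESOURCE_ATTRS.get(tag)
        url = attr_map.get(wanted) if wanted else None
        if url and url.lower().startswith("http://"):
            self.insecure.append(url)


def find_mixed_content(html: str) -> list[str]:
    """Return every http:// subresource URL found in the given HTML."""
    finder = MixedContentFinder()
    finder.feed(html)
    finder.close()
    return finder.insecure
```

Jekyll writes the generated site to the _site directory by default, so one option is to feed each .html file from there into find_mixed_content after a build.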

The key lesson for us was that we need to know the capabilities of our SEO tools, and how to use them, in great detail. We must keep our knowledge of DNS, various CMSs, coding, website security, and so on at a high level. That allows us as webmasters to discover problems, partly resolve them on our own, and save developers’ valuable time.

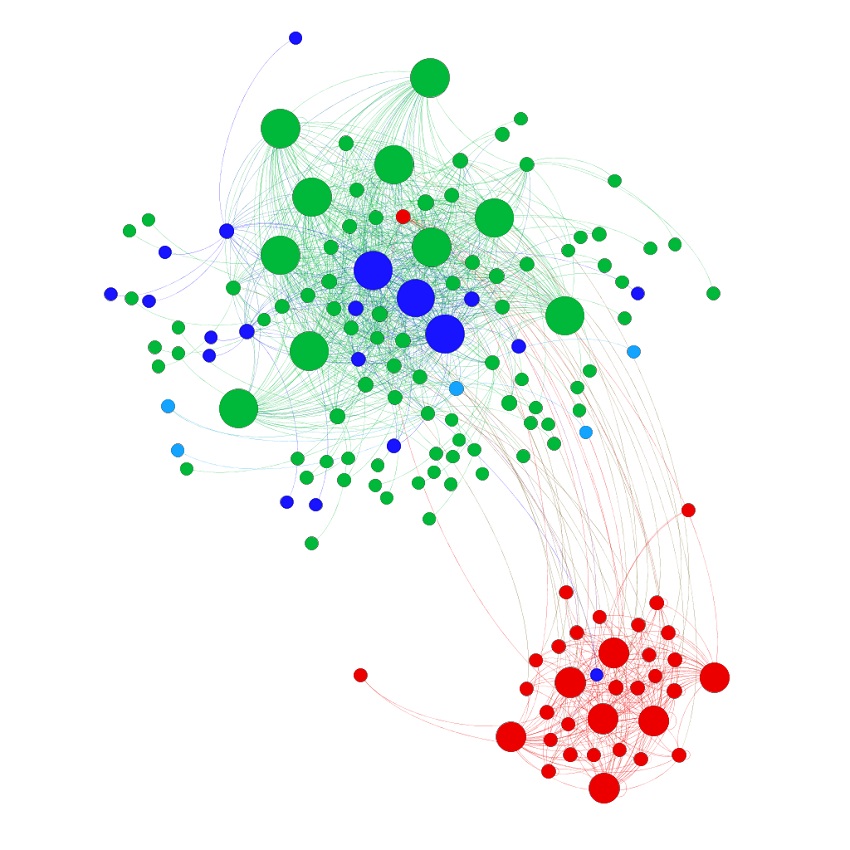

The gif below shows how the link graph of heurekadevs.cz changed after the duplicate URLs were removed. We created the animation in Gephi, a graph visualization tool, using the Force Atlas 2 layout algorithm; the colour coding is the same as in the image above, with red nodes marking duplicate URLs.
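The red duplicate nodes are URL variants that serve the same page. As a sketch of how such duplicates might be flagged in a crawl export (for instance from Screaming Frog), the code below groups URLs by a normalized key. The normalization rules (ignoring the scheme, a leading www., a trailing index.html, and a trailing slash) are assumptions for illustration, not the exact rules we used, and the function names are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def canonical_key(url: str) -> str:
    """Collapse URL variants that are assumed to serve the same page."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]
    path = parts.path or "/"
    if path.endswith("/index.html"):
        path = path[: -len("index.html")]  # keep the trailing slash for now
    if len(path) > 1:
        path = path.rstrip("/") or "/"
    query = f"?{parts.query}" if parts.query else ""
    return host + path + query  # the scheme and #fragment are ignored


def duplicate_groups(urls: list[str]) -> list[list[str]]:
    """Return groups of crawled URLs that share the same canonical key."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        groups[canonical_key(url)].append(url)
    return [members for members in groups.values() if len(members) > 1]
```

Each group that comes back is a candidate for a redirect or canonical fix; its members correspond to the nodes that would be coloured red in the graph.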

Conclusion

Many weeks have passed since the eeghiethi spam was removed from the search results, yet Google still shows an incorrect number of results for the domain in site: operator queries. That will probably change when the temporary block on the subdomain expires and Google discovers that it no longer exists. We’re curious to see what happens then ourselves.

2022 Update Note: The previous paragraph was written in 2020. Nowadays, the subdomain is long gone and forgotten.

If you use Github to host a website, be careful how you configure DNS. Set up a Google Search Console property for the website and learn to use the tool. Be careful whom you give full rights to in the GSC. Fix problems quickly, effectively, and logically, seeking out the root cause.

As webmasters at Heureka, we must know how to do our jobs and not rely on developers having the capacity to fix every little thing. We celebrated our success for about a minute, which was all the time we had: during the two days we spent on the dev blog, more than 100,000 new structured data errors had appeared across other GSC properties. But that is another story.

We should note that at Heureka we approach security dutifully, thoroughly, and without compromise. We are publishing this small, unimportant Github mishap as a fun fail story that may help beginners and less experienced users dealing with similar problems.